
Tensors are the data structure that TensorFlow defines for itself.

A tensor is a multi-dimensional array with a uniform type (called a dtype). You can see all supported dtypes at [tf.dtypes.DType](<https://www.tensorflow.org/api_docs/python/tf/dtypes/DType?hl=zh-cn>).

A tensor carries three pieces of metadata:

- name

- shape

- dtype

If you are familiar with NumPy, you will notice that tensors are similar to np.arrays.

Like Python numbers and strings, all tensors are immutable: you can never update the contents of a tensor, only create a new one.

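Although a tensor is immutable, a Python name can still be bound or rebound to one, as in an assignment such as a = b. In the session below, the second assignment creates a new tensor and rebinds a to it: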

>>> a=tf.constant(4)

>>> a

<tf.Tensor 'Const:0' shape=() dtype=int32>

>>> a=tf.constant(10)

>>> a

<tf.Tensor 'Const_1:0' shape=() dtype=int32>

The rest of this part focuses on shape; name and dtype are usually fixed up front and rarely cause mistakes.

shape

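Whenever you compute with ops, check that the shapes of the inputs and outputs are compatible. If the code gets a shape wrong, the op fails with an error right away; this comes up often in everyday code.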

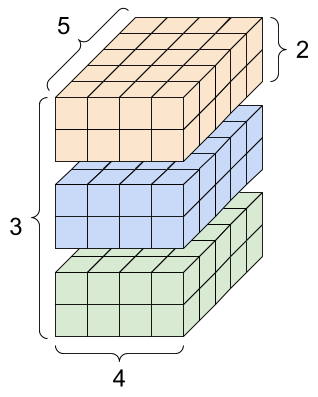

rank_4_tensor = tf.zeros([3, 2, 4, 5])

Tensors are indexed the same way as matrices:

- Indexes start at 0

- Negative indexes count backwards from the end

- Colons, :, are used for slices: start:stop:step

This works for both single-axis and multi-axis indexing.
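The single-axis rules are the same ones Python sequences follow, so they can be tried without TensorFlow. The sketch below uses a plain list (the name `values` is only for illustration); wrapping it as `tf.constant(values)` and applying the same index expressions should select the same elements.

```python
# Plain-Python illustration of the indexing rules listed above.
# `values` is an illustrative name, not part of TensorFlow.
values = [0, 1, 1, 2, 3, 5, 8, 13, 21, 34]

first = values[0]               # indexes start at 0
last = values[-1]               # negative indexes count from the end
every_other = values[2:7:2]     # start:stop:step, stop is exclusive
reversed_values = values[::-1]  # a negative step walks backwards

print(first, last)      # 0 34
print(every_other)      # [1, 3, 8]
print(reversed_values)  # [34, 21, 13, 8, 5, 3, 2, 1, 1, 0]
```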

reshape

Generally, the only reasonable uses of [tf.reshape](<https://www.tensorflow.org/api_docs/python/tf/reshape?hl=zh-cn>) are to combine or split adjacent axes (or to add/remove 1s).

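Note: do not reshape arbitrarily, or the data ends up scrambled; reshape has rules and they must be followed. For example, to swap axes use tf.transpose; reshape will most likely give the wrong result.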

tf.Tensor(

[[[ 0 1 2 3 4]

[ 5 6 7 8 9]]

[[10 11 12 13 14]

[15 16 17 18 19]]

[[20 21 22 23 24]

[25 26 27 28 29]]], shape=(3, 2, 5), dtype=int32)

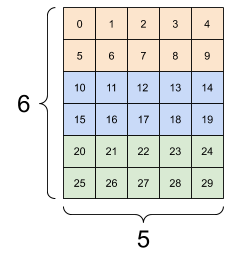

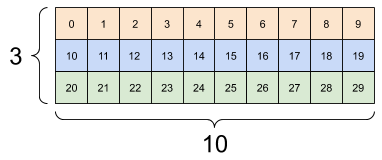

For a 3x2x5 tensor, reshaping to (3x2)x5 or 3x(2x5) are both reasonable, because the slices do not get mixed:

print(tf.reshape(rank_3_tensor, [3*2, 5]), "\n")

print(tf.reshape(rank_3_tensor, [3, -1]))

tf.Tensor(

[[ 0 1 2 3 4]

[ 5 6 7 8 9]

[10 11 12 13 14]

[15 16 17 18 19]

[20 21 22 23 24]

[25 26 27 28 29]], shape=(6, 5), dtype=int32)

tf.Tensor(

[[ 0 1 2 3 4 5 6 7 8 9]

[10 11 12 13 14 15 16 17 18 19]

[20 21 22 23 24 25 26 27 28 29]], shape=(3, 10), dtype=int32)

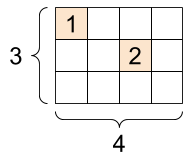
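To see why reshape cannot stand in for transpose: reshape keeps the elements in row-major order and only regroups them, while transpose moves each element to a new position. Below is a minimal plain-Python sketch on a 2x3 matrix. The helpers `flatten`, `reshape_2d` and `transpose_2d` are illustrative stand-ins written for this note, assumed to mirror what tf.reshape and tf.transpose do in this small case; they are not TensorFlow functions.

```python
# Plain-Python model of reshape vs. transpose on a 2x3 matrix.
# All helper names here are hypothetical and exist only for illustration.

def flatten(matrix):
    """Read the elements row by row (row-major order)."""
    return [x for row in matrix for x in row]

def reshape_2d(matrix, rows, cols):
    """Keep the row-major element order and only regroup it into rows x cols."""
    flat = flatten(matrix)
    if rows * cols != len(flat):
        raise ValueError("reshape must keep the number of elements")
    return [flat[r * cols:(r + 1) * cols] for r in range(rows)]

def transpose_2d(matrix):
    """Swap the two axes: element [i][j] moves to [j][i]."""
    return [list(col) for col in zip(*matrix)]

m = [[1, 2, 3],
     [4, 5, 6]]

reshaped = reshape_2d(m, 3, 2)
transposed = transpose_2d(m)

print(reshaped)    # [[1, 2], [3, 4], [5, 6]]
print(transposed)  # [[1, 4], [2, 5], [3, 6]]
```

Both results have shape 3x2, but only the transposed one keeps each original column together, which is what swapping axes is supposed to do.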

Sparse tensors

# Sparse tensors store values by index in a memory-efficient manner

sparse_tensor = tf.sparse.SparseTensor(indices=[[0, 0], [1, 2]],

values=[1, 2],

dense_shape=[3, 4])

print(sparse_tensor, "\n")

# We can convert sparse tensors to dense

print(tf.sparse.to_dense(sparse_tensor))

SparseTensor(indices=tf.Tensor(

[[0 0]

[1 2]], shape=(2, 2), dtype=int64), values=tf.Tensor([1 2], shape=(2,), dtype=int32), dense_shape=tf.Tensor([3 4], shape=(2,), dtype=int64))

tf.Tensor(

[[1 0 0 0]

[0 0 2 0]

[0 0 0 0]], shape=(3, 4), dtype=int32)
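The output above follows directly from the three fields of the sparse representation: each entry of indices names one cell, the matching entry of values fills it, and every other cell is zero. Here is a rough plain-Python sketch of that conversion for the 2-D case; `to_dense` is a hypothetical helper for illustration, not the implementation of tf.sparse.to_dense.

```python
# Plain-Python sketch of turning (indices, values, dense_shape) into a dense
# 2-D matrix. `to_dense` is an illustrative helper, not a TensorFlow function.

def to_dense(indices, values, dense_shape):
    rows, cols = dense_shape
    # Start with all zeros, the implicit value of every cell that is not listed.
    dense = [[0] * cols for _ in range(rows)]
    # Write each stored value at its (row, column) position.
    for (r, c), v in zip(indices, values):
        dense[r][c] = v
    return dense

dense = to_dense(indices=[[0, 0], [1, 2]], values=[1, 2], dense_shape=[3, 4])
for row in dense:
    print(row)
# [1, 0, 0, 0]
# [0, 0, 2, 0]
# [0, 0, 0, 0]
```

Only two values are stored for twelve cells, which is where the memory saving comes from.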

Tensor operations

tf.ones_like

tf.add

tf.string_to_number

.....

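One more feature worth mentioning is broadcasting. It is very useful, and the common arithmetic ops (addition, subtraction, multiplication, division) all support it.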
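Broadcasting follows the NumPy-style rule: shapes are compared from the last axis backwards, and two sizes are compatible when they are equal or one of them is 1. The sketch below computes the resulting shape in plain Python so the rule can be checked by hand; `broadcast_shape` is a hypothetical helper written for this note, not a TensorFlow API.

```python
from itertools import zip_longest

# Plain-Python sketch of the broadcasting shape rule.
# `broadcast_shape` is an illustrative helper, not a TensorFlow function.

def broadcast_shape(shape_a, shape_b):
    result = []
    # Walk both shapes from the last axis; a missing axis counts as size 1.
    for a, b in zip_longest(reversed(shape_a), reversed(shape_b), fillvalue=1):
        if a == b or b == 1:
            result.append(a)
        elif a == 1:
            result.append(b)
        else:
            raise ValueError(f"shapes {shape_a} and {shape_b} cannot be broadcast")
    return list(reversed(result))

print(broadcast_shape([3, 1], [1, 4]))   # [3, 4]
print(broadcast_shape([2, 3, 5], [5]))   # [2, 3, 5]
print(broadcast_shape([], [3]))          # [3]  (a scalar broadcasts to anything)
```

A pair such as [3, 2] and [4] raises ValueError here, which corresponds to the shape-mismatch errors mentioned in the shape section.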

Defining variables

The difference between Variable and get_variable

# TensorFlow 1.x API; in TensorFlow 2.x these functions live under tf.compat.v1.
import tensorflow as tf

with tf.variable_scope("one"):
    a = tf.get_variable("v", [1])  # a.name == "one/v:0"
    print(a)

# Re-entering the scope without reuse and asking for the same name fails:
# with tf.variable_scope("one"):
#     b = tf.get_variable("v", [1])  # ValueError: Variable one/v already exists

with tf.variable_scope("one", reuse=True):
    c = tf.get_variable("v", [1])  # c.name == "one/v:0"
    print(c)

with tf.variable_scope("two"):
    d = tf.get_variable("v", [1])  # d.name == "two/v:0"
    print(d)
    e = tf.Variable(1, name="v", expected_shape=[1])  # e.name == "two/v_1:0"
    print(e)

assert a is c  # Passes: both names refer to the same object.
assert a is d  # AssertionError: they are different objects.
assert d is e  # Would also fail (not reached): they are different objects.

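As the example shows, get_variable checks whether a variable with the same name already exists in the current graph, whereas Variable keeps adding new variables to the graph without any check; TensorFlow simply appends a suffix such as _1 to the name to keep it unique. In everyday code I still recommend get_variable, because it makes later work, such as sharing variables, easier. The script prints: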

<tf.Variable 'one/v:0' shape=(1,) dtype=float32_ref>

<tf.Variable 'one/v:0' shape=(1,) dtype=float32_ref>

<tf.Variable 'two/v:0' shape=(1,) dtype=float32_ref>

<tf.Variable 'two/v_1:0' shape=() dtype=int32_ref>

Traceback (most recent call last):

File "f:/vivocode/tfwork/1_1.py", line 17, in <module>

assert(a is d) #AssertionError: they are different objects

AssertionError
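The behaviour seen in this output can be imitated with a small toy model, which may make the rule easier to remember. The sketch below is plain Python and not TensorFlow code: `ToyGraph` and its methods are hypothetical names, and the model only covers naming and lookup, under the assumption that get_variable consults a store of names while Variable merely makes its name unique.

```python
# Toy model of the naming behaviour described above. NOT TensorFlow code:
# ToyGraph and its methods are hypothetical and exist only for illustration.

class ToyGraph:
    def __init__(self):
        self.store = {}          # name -> object created through get_variable
        self.used_names = set()  # every name handed out so far

    def _unique(self, name):
        """Return name, or name_1, name_2, ... if it is already taken."""
        candidate, n = name, 0
        while candidate in self.used_names:
            n += 1
            candidate = f"{name}_{n}"
        self.used_names.add(candidate)
        return candidate

    def variable(self, name):
        """Modelled on tf.Variable: always creates, renaming on a clash."""
        return {"name": self._unique(name)}

    def get_variable(self, name, reuse=False):
        """Modelled on tf.get_variable: looks the name up before creating."""
        if name in self.store:
            if not reuse:
                raise ValueError(f"Variable {name} already exists")
            return self.store[name]
        if reuse:
            raise ValueError(f"Variable {name} does not exist")
        self.used_names.add(name)
        self.store[name] = {"name": name}
        return self.store[name]

g = ToyGraph()
a = g.get_variable("one/v")
c = g.get_variable("one/v", reuse=True)  # same object as a
d = g.get_variable("two/v")              # different scope, different object
e = g.variable("two/v")                  # name clash, so it gets a suffix

print(a is c)     # True
print(a is d)     # False
print(e["name"])  # two/v_1
```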

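name_scope and variable_scope

In the example below, get_variable ignores name_scope, while Variable does not.

name_scope only prefixes the names of ops and of variables created with tf.Variable; it has no effect on variables created with get_variable.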

with tf.name_scope("my_scope"):
    v1 = tf.get_variable("var1", [1], dtype=tf.float32)
    v2 = tf.Variable(1, name="var2", dtype=tf.float32)
    a = tf.add(v1, v2)

print(v1.name)  # var1:0
print(v2.name)  # my_scope/var2:0
print(a.name)   # my_scope/Add:0

If you want get_variable to pick up the prefix as well, you have to use variable_scope:

with tf.variable_scope("my_scope"):
    v1 = tf.get_variable("var1", [1], dtype=tf.float32)
    v2 = tf.Variable(1, name="var2", dtype=tf.float32)
    a = tf.add(v1, v2)

print(v1.name)  # my_scope/var1:0
print(v2.name)  # my_scope/var2:0
print(a.name)   # my_scope/Add:0

Personally, I think the main benefit of these scopes is that they make variable sharing possible, in combination with reuse=True:

with tf.name_scope("foo"):
    with tf.variable_scope("var_scope"):
        v = tf.get_variable("var", [1])

with tf.name_scope("bar"):
    with tf.variable_scope("var_scope", reuse=True):
        v1 = tf.get_variable("var", [1])

assert v1 is v  # The surrounding name_scope differs, yet it is the same variable.
print(v.name)   # var_scope/var:0
print(v1.name)  # var_scope/var:0

About parameter sharing

with tf.compat.v1.variable_scope('sparse_autoreuse', reuse=tf.compat.v1.AUTO_REUSE):
    # Define the parameters here; to be shared, they must use the same names.
    pass

Benefits of parameter sharing

- Parameters do not have to be created repeatedly

- It saves storage

- Constraints between features?
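The first two benefits can be illustrated with a toy get-or-create store, which is roughly the behaviour AUTO_REUSE asks for: create the parameter on the first request and hand back the same object afterwards. This is a plain-Python sketch, not TensorFlow code; `params`, `get_param` and `embed` are hypothetical names used only for illustration.

```python
# Toy get-or-create parameter store imitating reuse=AUTO_REUSE.
# NOT TensorFlow code: params, get_param and embed are hypothetical names.

params = {}

def get_param(name, size):
    # Create the parameter only the first time this name is requested.
    if name not in params:
        params[name] = [0.0] * size
    return params[name]

def embed(scope, size):
    # A stand-in "layer" whose only parameter is a weight vector.
    return get_param(scope + "/weights", size)

w_user = embed("shared_embedding", 8)
w_item = embed("shared_embedding", 8)  # same name, so nothing new is created

print(w_user is w_item)  # True
print(len(params))       # 1

w_user[0] = 1.5          # an update made through one handle...
print(w_item[0])         # 1.5  ...is visible through the other
```

The last two lines also hint at the third bullet: because both call sites read the same weights, whatever one of them learns applies to the other as well.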

Q: Look up the common tensor operation APIs, such as ones_like.